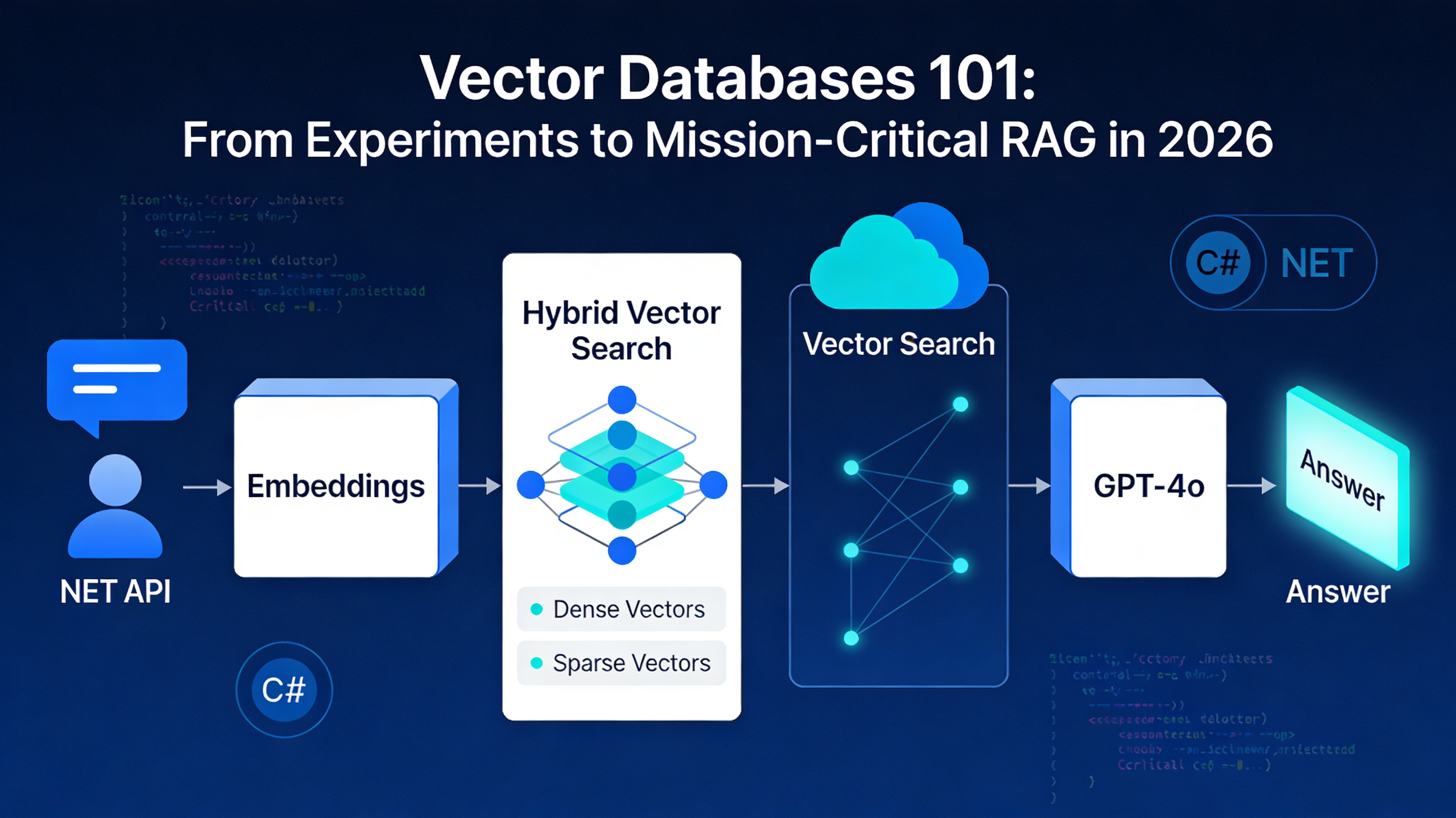

Vector Databases and Semantic Search: Building Modern Web Applications in 2026

Learn how vector databases enable semantic search beyond keyword matching, implement RAG and Agentic RAG patterns with .NET, and compare platforms like Pinecone and Weaviate for production applications.

Search is broken. Your users are searching for "comfortable running shoes for flat feet" but traditional databases return results based on exact keyword matches. If the shoes are tagged with "arch support" instead of "flat feet," they never appear. Vector databases and semantic search fix this fundamental problem by understanding what users actually mean, not just what they type.

This shift from keyword matching to meaning-based retrieval is reshaping how modern applications work. By 2026, this technology is no longer optional for competitive advantage. Whether you are building customer support systems, product discovery platforms, or intelligent document retrieval, understanding vector databases is essential.

Why Traditional Search Falls Short in 2026

Search has not evolved with user expectations. When someone searches for "employment termination without cause," they expect results about wrongful dismissal and severance protections. Traditional keyword search fails here because those exact words may not appear in relevant documents. Exact phrase matching is now a bottleneck for modern applications.

The business impact is measurable. Customer satisfaction drops when search results feel irrelevant. Product discovery systems lose conversions when users cannot find what they need. Support teams waste time searching for documentation instead of solving problems. These are not only technical problems; they affect revenue and operational efficiency.

Vector databases solve this by encoding meaning into mathematical representations called embeddings. Instead of searching for exact words, the system understands semantic relationships. A query about "smartphone accessories" now surfaces charging cables, protective cases, and screen protectors, even if the user never typed those specific terms.

How Semantic Search Actually Works

Semantic search relies on vector embeddings: high-dimensional numerical representations of text that capture meaning. This is not magic. The process is straightforward:

First, both documents and user queries are converted into vectors using embedding models. Modern embedding architectures like Late Interaction models (ColBERT) and multi-modal encoders (CLIP-descendants) encode semantic information into numerical space. Two vectors positioned close together in this space have similar meaning. Two distant vectors represent unrelated concepts.

When a user types a query, the system creates a vector representation of that query. The vector database then finds the closest vectors to that query vector using similarity metrics like cosine similarity or Euclidean distance. The documents corresponding to those closest vectors are returned as results.
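To make the nearest-vector step concrete, here is a minimal sketch of cosine similarity and an exhaustive top-k search in C#. The `VectorSearch` class and its method names are illustrative assumptions, not part of any vector database SDK, and real embeddings come from an embedding model and have hundreds or thousands of dimensions.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class VectorSearch
{
    // Cosine similarity: dot(a, b) / (|a| * |b|). 1 means same direction, 0 means unrelated.
    public static double CosineSimilarity(float[] a, float[] b)
    {
        if (a.Length != b.Length)
            throw new ArgumentException("Vectors must have the same dimension.");

        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.Length; i++)
        {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        if (normA == 0 || normB == 0) return 0; // a zero vector has no direction
        return dot / (Math.Sqrt(normA) * Math.Sqrt(normB));
    }

    // Exhaustive search: score every document against the query, keep the k best.
    public static List<(string Id, double Score)> TopK(
        float[] query, IReadOnlyDictionary<string, float[]> documents, int k)
    {
        return documents
            .Select(d => (Id: d.Key, Score: CosineSimilarity(query, d.Value)))
            .OrderByDescending(r => r.Score)
            .Take(k)
            .ToList();
    }
}
```

A vector database replaces the exhaustive scan in `TopK` with an index, but the scoring idea stays the same.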

This process happens in milliseconds. A modern vector database achieves this speed through specialized indexing structures like HNSW (Hierarchical Navigable Small World), which enable approximate nearest neighbor search at scale. You do not need to compare the query vector against every document vector; the index shortcuts this process through graph-based navigation.

Visualizing the Semantic Space

Imagine a three-dimensional space where similar concepts cluster near each other. "Running shoes," "athletic footwear," and "sports sneakers" occupy the same neighborhood. "Formal dress shoes" occupy a completely different region. Your user's query for "comfortable athletic footwear" becomes a point in this space. The database finds the closest points to that query point and returns the corresponding products. This clustering of related concepts is what makes semantic search powerful: it understands meaning, not just keywords.

The RAG Pattern: Connecting Semantic Search to Generative AI

Retrieval-Augmented Generation (RAG) is where vector databases become indispensable infrastructure. RAG addresses two critical limitations of large language models: they have a knowledge cutoff date, and they hallucinate, generating plausible-sounding but false information when uncertain.

The basic RAG pattern is elegant:

- Your documents are broken into manageable chunks and converted to embeddings

- These embeddings are stored in a vector database alongside metadata about the source

- When a user asks a question, the system performs semantic search to find relevant documents

- The retrieved documents are fed into an LLM along with the user's question

- The LLM generates a response grounded in actual data instead of generating from memory

The result is an AI system that knows your company's specific information, maintains current knowledge, and can cite where it found information. This approach powers customer support chatbots that answer with real order details, sales assistants that reference current pricing, and research tools that cite peer-reviewed articles.
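The five steps can be sketched end to end in a few dozen lines of C#. This is a toy under stated assumptions: `ToyEmbedder`, `RagPipeline`, and `Passage` are hypothetical names, the embedder only counts word overlap (a real system would call an embedding model), the index is an in-memory list rather than a vector database, and the final LLM call is left out.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text;

public sealed record Passage(string Source, string Text, float[] Embedding);

// Toy stand-in for an embedding model: one dimension per distinct word seen so far.
// It captures word overlap only, not meaning.
public sealed class ToyEmbedder
{
    private const int Dimensions = 256;
    private static readonly char[] Separators = { ' ', '.', ',', '?', '!', ';', ':', '\n' };
    private readonly Dictionary<string, int> _slots = new();

    public float[] Embed(string text)
    {
        var vector = new float[Dimensions];
        foreach (var word in text.ToLowerInvariant().Split(Separators, StringSplitOptions.RemoveEmptyEntries))
        {
            if (!_slots.TryGetValue(word, out int slot))
            {
                slot = _slots.Count % Dimensions; // wraps around after 256 distinct words
                _slots[word] = slot;
            }
            vector[slot] += 1f;
        }
        return vector;
    }
}

public sealed class RagPipeline
{
    private readonly ToyEmbedder _embedder = new();
    private readonly List<Passage> _index = new();

    // Steps 1 and 2: chunk the document, embed each chunk, store it with its source.
    public void AddDocument(string source, string text, int wordsPerChunk = 50)
    {
        var words = text.Split(' ', StringSplitOptions.RemoveEmptyEntries);
        for (int i = 0; i < words.Length; i += wordsPerChunk)
        {
            var chunk = string.Join(" ", words.Skip(i).Take(wordsPerChunk));
            _index.Add(new Passage(source, chunk, _embedder.Embed(chunk)));
        }
    }

    // Step 3: embed the question and return the k most similar chunks.
    public List<Passage> Retrieve(string question, int k)
    {
        var query = _embedder.Embed(question);
        return _index.OrderByDescending(p => Cosine(query, p.Embedding)).Take(k).ToList();
    }

    // Step 4: combine retrieved chunks and the question into one grounded prompt.
    // Step 5 (sending the prompt to an LLM) depends on the client you use.
    public static string BuildPrompt(string question, IEnumerable<Passage> passages)
    {
        var prompt = new StringBuilder("Answer using only the sources below and cite them.\n\n");
        foreach (var p in passages)
            prompt.Append('[').Append(p.Source).Append("] ").Append(p.Text).Append('\n');
        return prompt.Append("\nQuestion: ").Append(question).ToString();
    }

    private static double Cosine(float[] a, float[] b)
    {
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.Length; i++)
        {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return normA == 0 || normB == 0 ? 0 : dot / Math.Sqrt(normA * normB);
    }
}
```

Keeping the source name on every passage is what lets the generated answer cite where its information came from.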

Agentic RAG: The 2026 Evolution

By 2026, basic RAG is table stakes. The cutting edge has moved to Agentic RAG, where the AI itself decides which tool or database to query based on the user's request. Instead of a single vector database search, the system routes queries across multiple data sources: vector databases for semantic search, traditional databases for structured data, APIs for real-time information, and specialized indexes for specific domains.

An Agentic RAG system might handle a user asking "What was our highest revenue product in Q3, and what do customers say about it?" by first querying your financial database for revenue data, then retrieving customer sentiment from a vector database of reviews and support tickets, then synthesizing both into a comprehensive answer. The AI decides the routing, not your code. For a deeper exploration of how autonomous AI agents are transforming enterprise operations, see our guide on autonomous AI agents and agentic intelligence.
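The routing idea can be sketched without committing to any agent framework. In the snippet below, `AgenticRouter` and the `planner` delegate are hypothetical: the delegate stands in for the LLM call that chooses which tools to run, and each tool is just a function from a question to a text result.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class AgenticRouter
{
    // planner: stand-in for the model. It receives the question and the available
    // tool names, and returns the names of the tools to call, in order.
    public static string Answer(
        string question,
        IReadOnlyDictionary<string, Func<string, string>> tools,
        Func<string, IReadOnlyCollection<string>, IReadOnlyList<string>> planner)
    {
        var plan = planner(question, tools.Keys.ToList());
        var findings = new List<string>();

        foreach (var toolName in plan)
        {
            // Do not trust the plan blindly: ignore tools that are not registered.
            if (!tools.TryGetValue(toolName, out var tool)) continue;
            findings.Add($"{toolName}: {tool(question)}");
        }

        // In a full system these findings go back to the model for synthesis.
        return string.Join("\n", findings);
    }
}
```

Even in this reduced form, the application code registers tools and validates the plan, while the choice of which sources to query comes from the planner.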

Multi-Modal Semantic Search: Beyond Text

By 2026, semantic search is no longer text-only. Vector databases now store and search across images, audio, and video embeddings alongside text. This expansion dramatically broadens application possibilities.

E-commerce applications use visual embeddings to enable "search by image": users upload a photo of a product they want, and the system finds similar items in your catalog. Fashion retailers find this capability essential for discovery.

Audio embeddings enable voice search with semantic understanding. Instead of exact phrase matching on transcripts, the system understands the meaning of spoken queries and finds relevant audio content (customer calls, training videos, or podcast episodes) based on semantic relevance.

Multi-modal systems combine these embedding types. A product query combining text ("waterproof hiking boots") with an image of a boot style and audio feedback about comfort preferences creates a rich, multi-dimensional search experience.

Comparing Vector Database Platforms for 2026

Choosing the right vector database depends on your specific application requirements. No single platform dominates every use case. Understanding the strengths and trade-offs is essential for production decisions.

The following table provides a quick reference for platform capabilities. Detailed explanations follow below.

| Feature | Pinecone | Weaviate | Qdrant | Milvus |
|---|---|---|---|---|
| Primary Strength | Managed Simplicity | Hybrid Search & Control | Low Latency | High Throughput |
| Best For | Fast Prototyping | Complex Metadata | Interactive Apps | Massive Datasets |
| Deployment | Fully Managed | Managed + Self-Hosted | Managed + Self-Hosted | Self-Hosted |
| Operational Complexity | Low | Medium | Medium | High |
| Query Latency | Under 120 ms (P1 pods) | Variable | 94.5 ms | 250 ms |
| Throughput (QPS) | Moderate | Moderate | 4.7 | 46.3 |
| Best Vector Volume | Up to 100M+ | 10M-100M | 1M-20M | 50M+ |
| Hybrid Search | No | Yes | Limited | No |
| Metadata Filtering | Standard | Advanced | Excellent | Good |
| Cost Model | Premium/Managed | Mid-Range | Mid-Range | Infrastructure-Only |
| Multi-Modal Support | Yes | Yes | Growing | Limited |

Pinecone: Managed Simplicity with Premium Performance

Pinecone is the path of least resistance to production. As a fully managed service, it handles scaling, replication, indexing, and infrastructure. You focus on application logic, not database operations.

Performance is consistently high. Pinecone's performance-optimized pods (P1) maintain search latency under 120 milliseconds even at scale. Storage-optimized pods (S1) handle more than 100 million vectors while keeping searches under 500 milliseconds. These are real-world production numbers, not theoretical benchmarks.

The trade-off is flexibility and cost. You cannot fine-tune indexing strategies or control memory allocation at a granular level. You pay premium pricing for convenience and predictable performance.

Pinecone achieves 90 to 99 percent recall rates consistently. Recall measures what percentage of truly relevant results are returned. This consistency matters for applications where missing relevant results damages user experience.
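Recall is easy to measure yourself once you have a set of known-relevant results, for example the true nearest neighbors from an exhaustive search. The helper below is a minimal sketch; `RetrievalMetrics` and `RecallAtK` are illustrative names, not part of the Pinecone SDK.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class RetrievalMetrics
{
    // recall@k = (relevant items found in the top k results) / (all relevant items)
    public static double RecallAtK(IReadOnlyList<string> returned, ISet<string> relevant, int k)
    {
        if (relevant.Count == 0) return 1.0; // nothing to find, so nothing was missed

        int hits = returned
            .Take(k)
            .Distinct()
            .Count(id => relevant.Contains(id));

        return (double)hits / relevant.Count;
    }
}
```

For instance, if three documents are relevant and two of them appear in the top results, recall is 2/3, or about 67 percent.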

Use Pinecone if your priority is getting to production quickly, your team prefers not managing infrastructure, and you can absorb the premium pricing for simplicity and reliability.

Weaviate: Flexible Hybrid Search with Operational Control

Weaviate emphasizes user control over automatic management. You can choose between fully managed cloud hosting or self-hosted deployment, giving you the flexibility to optimize for your specific constraints.

Weaviate pioneered hybrid search combining keyword-based BM25 with vector similarity. When a query contains rare technical terms or specific names, keyword search retrieves relevant results that pure semantic search might miss. By weighting keyword matches (using an alpha parameter) against vector similarity scores, you can balance semantic understanding with keyword precision.

This flexibility comes with complexity. Hybrid search parameters require tuning for your specific use case. Set alpha wrong and your results may be worse than either method alone. Your team needs deeper engagement with indexing configuration and performance optimization.
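The effect of alpha is easier to see in code. The sketch below is a generic weighted fusion, not Weaviate's internal implementation: it min-max normalizes each score list and blends them, following the convention that alpha = 1 means pure vector search and alpha = 0 means pure keyword search. `HybridSearch` and `Fuse` are hypothetical names.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class HybridSearch
{
    // Min-max normalize so BM25-style scores and similarity scores share a 0..1 scale.
    private static Dictionary<string, double> Normalize(IReadOnlyDictionary<string, double> scores)
    {
        if (scores.Count == 0) return new Dictionary<string, double>();
        double min = scores.Values.Min();
        double max = scores.Values.Max();
        return scores.ToDictionary(
            s => s.Key,
            s => max == min ? 1.0 : (s.Value - min) / (max - min));
    }

    private static double ScoreOrZero(Dictionary<string, double> scores, string id) =>
        scores.TryGetValue(id, out var score) ? score : 0.0;

    // alpha = 1: vector scores only. alpha = 0: keyword scores only.
    public static List<(string Id, double Score)> Fuse(
        IReadOnlyDictionary<string, double> keywordScores,
        IReadOnlyDictionary<string, double> vectorScores,
        double alpha)
    {
        var keyword = Normalize(keywordScores);
        var vector = Normalize(vectorScores);

        return keyword.Keys.Union(vector.Keys)
            .Select(id => (Id: id,
                           Score: alpha * ScoreOrZero(vector, id) + (1 - alpha) * ScoreOrZero(keyword, id)))
            .OrderByDescending(r => r.Score)
            .ThenBy(r => r.Id, StringComparer.Ordinal) // deterministic order for ties
            .ToList();
    }
}
```

Running the same query at several alpha values against a labeled test set is a practical way to find the balance that suits your data.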

Weaviate is excellent for applications where you need operational control, hybrid search capabilities, and flexibility in deployment. Organizations with strong infrastructure teams and requirements for self-hosting benefit from this approach.

Qdrant: Real-Time Performance for Interactive Applications

Qdrant targets applications where latency directly impacts user experience. Individual query latency of 94.5 milliseconds is approximately 2.6 times faster than Milvus at 250 milliseconds. For real-time chatbots, recommendation systems, and interactive search interfaces, this difference is significant.

Qdrant also excels at metadata filtering. If you need to search within documents tagged with specific dates, categories, or user permissions, Qdrant handles these constraints efficiently while maintaining low latency.
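Conceptually, a filtered search restricts the candidate set by metadata and then ranks what remains by similarity. The sketch below shows that idea as a simple in-memory pre-filter; it is not how Qdrant implements filtering internally, and `TaggedDocument` and `FilteredSearch` are hypothetical names. It assumes embeddings are already normalized, so a dot product is enough for ranking.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public sealed record TaggedDocument(string Id, string Category, int Year, float[] Embedding);

public static class FilteredSearch
{
    // Apply the metadata filter first, then rank the survivors by similarity.
    public static List<TaggedDocument> Search(
        IEnumerable<TaggedDocument> documents,
        float[] query,
        Func<TaggedDocument, bool> filter,
        int k)
    {
        return documents
            .Where(filter)
            .OrderByDescending(d => Dot(query, d.Embedding))
            .Take(k)
            .ToList();
    }

    private static double Dot(float[] a, float[] b)
    {
        double sum = 0;
        for (int i = 0; i < a.Length; i++) sum += a[i] * b[i];
        return sum;
    }
}
```

The same filter is also where access permissions belong: a document the user may not see should never enter the candidate set.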

The limitation appears as data volume grows. Benchmarks show performance degradation beyond 10 million vectors. At 50 million vectors, Qdrant achieves 41.47 queries per second while competitors maintain higher throughput. For applications with massive vector collections, careful testing at your expected scale is necessary.

Choose Qdrant for interactive applications prioritizing low-latency response times, rich metadata filtering requirements, and datasets under 10-20 million vectors.

Milvus: Throughput and Bulk Operations

Milvus approaches the problem differently. Instead of optimizing for individual query latency, Milvus optimizes for throughput and concurrent operations. It achieves 46.33 queries per second compared to Qdrant's 4.7 QPS, roughly a 10-fold advantage.

Data ingestion speed demonstrates this philosophy. Milvus inserts 12 million vectors in 12 seconds. Qdrant requires 41 seconds. For applications constantly updating their vector database with new content, this difference translates to fresher search results and lower operational costs.

Milvus 2.6 brings significant improvements including 72 percent lower memory usage with RaBitQ quantization and 4 times faster queries. These performance gains make Milvus increasingly competitive for large-scale deployments.

Milvus is ideal for systems with high concurrency, frequent bulk data ingestion, very large vector collections, and environments where throughput matters more than individual query latency.

Implementing Semantic Search with .NET

Vector database technology is not exclusive to Python or JavaScript. Modern .NET applications can implement semantic search and RAG patterns effectively.

The AWS approach demonstrates this. Using the AWS SDK for .NET with Amazon Bedrock Knowledge Bases, you can build RAG applications that integrate vector search with large language models.

Here is the key pattern using the RetrieveAndGenerate API:

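```csharp
using Amazon.BedrockAgentRuntime;
using Amazon.BedrockAgentRuntime.Model;

// agentClient is an AmazonBedrockAgentRuntimeClient. userQuestion, knowledgeBaseId,
// and modelId (the ARN of the generation model) are supplied by the caller.
var request = new RetrieveAndGenerateRequest
{
    Input = new RetrieveAndGenerateInput { Text = userQuestion },
    RetrieveAndGenerateConfiguration = new RetrieveAndGenerateConfiguration
    {
        Type = RetrieveAndGenerateType.KNOWLEDGE_BASE,
        KnowledgeBaseConfiguration = new KnowledgeBaseRetrieveAndGenerateConfiguration
        {
            KnowledgeBaseId = knowledgeBaseId,
            ModelArn = modelId,
            RetrievalConfiguration = new KnowledgeBaseRetrievalConfiguration
            {
                VectorSearchConfiguration = new KnowledgeBaseVectorSearchConfiguration
                {
                    // Combine keyword and vector retrieval instead of vector-only search
                    OverrideSearchType = "HYBRID"
                }
            }
        }
    }
};

// The response carries the generated answer and the citations behind it
var result = await agentClient.RetrieveAndGenerateAsync(request);
```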

This approach handles the complexity of chunking documents, generating embeddings, managing the vector database, and orchestrating the retrieval and generation pipeline. You focus on your application logic, not infrastructure details.

.NET 10 Vector Integration: The Game Changer

.NET 10 transforms vector database integration for enterprise developers. Entity Framework Core 10 adds support for the vector data type and vector distance queries, initially through the SQL Server provider (SQL Server 2025 and Azure SQL), reducing the need for custom implementations or third-party wrappers for basic operations. These enhancements are part of the broader performance revolution in .NET 10.

The Microsoft.Extensions.AI abstraction layer provides a unified interface for generating embeddings, and the companion Microsoft.Extensions.VectorData abstractions do the same for vector stores, making it nearly as straightforward to swap providers as switching SQL providers. Here is how simple vector operations become:

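```csharp
using System.ComponentModel.DataAnnotations.Schema;
using Microsoft.Data.SqlTypes;
using Microsoft.EntityFrameworkCore;

// Define your data model with vector support
public class Product
{
    public int Id { get; set; }
    public string Name { get; set; } = string.Empty;

    // Maps to the SQL Server vector column type; 1536 is the embedding dimension
    [Column(TypeName = "vector(1536)")]
    public SqlVector<float> Embedding { get; set; }
}

// Query with semantic similarity. queryEmbedding is the float[] your embedding
// model produced for the search text; "cosine" selects the distance metric.
// VectorDistance computes exact distances; approximate indexes such as HNSW
// depend on what your database and provider support.
var queryVector = new SqlVector<float>(queryEmbedding);
var similarProducts = await context.Products
    .OrderBy(p => EF.Functions.VectorDistance("cosine", p.Embedding, queryVector))
    .Take(10)
    .ToListAsync();
```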

The vector type is now a first-class citizen in the ORM. You can perform semantic searches directly in your LINQ queries, combine vector similarity with traditional WHERE clauses, and handle all vector operations through standard Entity Framework patterns.

This integration means .NET teams are no longer second-class citizens in the AI space. The platform-native support for vectors puts .NET development on equal footing with Python and JavaScript ecosystems.

Real-World Applications: Where Semantic Search Delivers Value

Semantic search is not theoretical. Organizations across industries are deploying these systems to solve concrete business problems.

Product Discovery and E-Commerce

Customers shop using natural language, not database schema. When someone searches "moisture-wicking athletic wear for hot climates," your system needs to understand that this describes breathable fabrics suitable for tropical environments. Traditional search cannot connect these concepts.

A semantic search system creates embeddings for every product description, capturing attributes like material, climate suitability, and activity type. When the customer searches, the system finds products where the embedding space reflects their actual needs. Conversion rates improve because customers find what they seek. Return rates decrease because results match expectations.

Visual embeddings multiply this capability. Customers can upload or describe a product aesthetic they want, and the system finds structurally similar items even if the keywords differ completely.

Customer Support Automation

Customer support is expensive. Response time is critical. Information is scattered across documentation, FAQs, knowledge bases, and CRM systems. A support agent answering a question about order status must search multiple systems to provide accurate information.

RAG-based support chatbots retrieve relevant information in real time. When a customer asks "Why has my order not shipped yet?", the system searches internal knowledge bases for order status information, shipping policies, and common delays. It retrieves the specific customer's order details from the CRM. It generates a personalized response explaining the status and next steps. What previously required a human agent answering from memory now happens automatically with accurate, current information.

In 2026, the best support systems are moving to Agentic RAG, where the chatbot decides whether to pull from FAQs, ticket histories, product documentation, or real-time inventory systems based on the query intent. This intelligence dramatically improves resolution rates and customer satisfaction.

Document Retrieval and Knowledge Management

Organizations accumulate vast document collections. Enterprise search typically relies on keyword-based indexes that miss relevant information when terminology differs. Legal professionals searching for "wrongful termination" miss articles on "severance agreements" because exact keyword matching fails to recognize semantic overlap.

Vector databases enable comprehensive document retrieval. A researcher asking "What does our documentation say about compliance for financial services?" retrieves relevant documents even if they use different terminology like "regulatory requirements," "industry standards," or "audit procedures." All documents addressing the underlying concepts appear as relevant results.

Recommendation Systems

Beyond explicit search, vector embeddings power recommendation systems. Products with similar embeddings are considered related. Users with similar purchase history embeddings receive similar recommendations. This semantic understanding drives discovery, cross-selling, and personalization at scale.
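One simple way to turn purchase history into recommendations is to average the embeddings of what a user bought and look for nearby items they do not own yet. The sketch below assumes that approach; `Recommender`, `MeanVector`, and `Recommend` are illustrative names, and production systems usually weight recent or repeated purchases more heavily.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class Recommender
{
    // The user's "taste" vector: the element-wise mean of purchased item embeddings.
    public static float[] MeanVector(IReadOnlyList<float[]> vectors)
    {
        if (vectors.Count == 0) throw new ArgumentException("At least one vector is required.");
        var mean = new float[vectors[0].Length];
        foreach (var v in vectors)
            for (int i = 0; i < mean.Length; i++)
                mean[i] += v[i] / vectors.Count;
        return mean;
    }

    // Rank items the user has not purchased by similarity to their taste vector.
    public static List<string> Recommend(
        IReadOnlyDictionary<string, float[]> catalog, ISet<string> purchased, int k)
    {
        var history = catalog
            .Where(item => purchased.Contains(item.Key))
            .Select(item => item.Value)
            .ToList();
        var profile = MeanVector(history);

        return catalog
            .Where(item => !purchased.Contains(item.Key))
            .OrderByDescending(item => Cosine(profile, item.Value))
            .Take(k)
            .Select(item => item.Key)
            .ToList();
    }

    private static double Cosine(float[] a, float[] b)
    {
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.Length; i++)
        {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return normA == 0 || normB == 0 ? 0 : dot / Math.Sqrt(normA * normB);
    }
}
```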

Performance Benchmarks: What to Expect in Production

Semantic search performance involves multiple dimensions: latency (how fast results return), throughput (how many queries per second), recall (what percentage of relevant results are returned), and resource efficiency (CPU, memory, cost).

Testing reveals substantial improvements over traditional approaches. Vector-based anomaly detection achieved 94.6 percent accuracy compared to 62.3 percent for rule-based methods. The same vector database approach returned results in 14 milliseconds versus 242 milliseconds for traditional relational database scans.

Storage efficiency matters at scale. Advanced quantization techniques compress 1024-dimensional vectors to 1 bit per dimension while preserving most of the search quality. A 1024-dimensional float32 vector shrinks from 4,096 bytes to 128 bytes, a 32-fold reduction in storage with little loss of recall.
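The simplest form of 1-bit quantization keeps only the sign of each component and compares vectors by Hamming distance. The sketch below illustrates that basic scheme; it is not RaBitQ or any specific product's algorithm, and `BinaryQuantization` is a hypothetical helper name.

```csharp
using System;
using System.Numerics;

public static class BinaryQuantization
{
    // One bit per dimension: 1 if the component is positive, otherwise 0.
    // 1024 dimensions pack into 16 ulong values, which is 128 bytes.
    public static ulong[] Quantize(float[] vector)
    {
        var bits = new ulong[(vector.Length + 63) / 64];
        for (int i = 0; i < vector.Length; i++)
        {
            if (vector[i] > 0f)
                bits[i / 64] |= 1UL << (i % 64);
        }
        return bits;
    }

    // Hamming distance: the number of bit positions where the two codes differ.
    public static int HammingDistance(ulong[] a, ulong[] b)
    {
        if (a.Length != b.Length)
            throw new ArgumentException("Codes must have the same length.");

        int distance = 0;
        for (int i = 0; i < a.Length; i++)
            distance += BitOperations.PopCount(a[i] ^ b[i]);
        return distance;
    }
}
```

A common design is to use the compact codes for a fast first pass and then rescore the best candidates with the original full-precision vectors.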

Choosing the right platform means testing your specific use case at your expected scale. If you are considering supporting 50 million vectors with real-time interactive requirements, benchmark your top candidates at that volume. Theoretical performance sometimes diverges from practical results.
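When you run that benchmark, report percentiles rather than averages, because a few slow queries can hide behind a good mean. The helper below is a minimal sketch for summarizing measurements you have already collected (for example with `Stopwatch`); `LatencyStats` and its methods are illustrative names.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class LatencyStats
{
    // Nearest-rank percentile: the smallest sample with at least p percent
    // of all samples at or below it.
    public static double Percentile(IEnumerable<double> samplesMs, double p)
    {
        var sorted = samplesMs.OrderBy(x => x).ToArray();
        if (sorted.Length == 0) throw new ArgumentException("No samples.");
        if (p <= 0 || p > 100) throw new ArgumentOutOfRangeException(nameof(p));

        int rank = (int)Math.Ceiling(p * sorted.Length / 100.0);
        return sorted[Math.Clamp(rank, 1, sorted.Length) - 1];
    }

    // Throughput: completed queries divided by elapsed wall-clock time.
    public static double QueriesPerSecond(int queryCount, TimeSpan wallClock) =>
        queryCount / wallClock.TotalSeconds;
}
```

Comparing p50 with p95 or p99 at your target vector count shows whether a platform stays predictable under load or only looks fast on typical queries.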

Common Mistakes When Implementing Vector Databases

Organizations new to vector databases typically make predictable mistakes that impact cost and performance.

First mistake: Ignoring hybrid search. Pure semantic search struggles with rare keywords, technical terms, and exact name matches. Combining keyword search with semantic search handles edge cases that either method alone misses. Implement hybrid search early.

Second mistake: Poor chunking strategies. How you split documents into vectors affects quality. Chunks too small lose context. Chunks too large make embeddings less precise. Experiment with chunk sizes and overlap strategies before production deployment.

Third mistake: Insufficient metadata. Store relevant metadata with embeddings. Timestamps, document sources, access permissions, and categorization enable filtering that improves search quality and enables security controls.

Fourth mistake: Treating vectors as static. As your products, documents, or understanding evolves, your vectors must update. Stale embeddings generate stale results. Plan for continuous embedding regeneration and vector database updates.

Fifth mistake: Underestimating embedding quality. The embedding model determines search quality. A better model costs more but returns dramatically better results. For 2026, modern models like Late Interaction (ColBERT) outperform traditional approaches like BERT. Testing embedding models against your use case is essential. What works for general-purpose search may not work for specialized domains.

Sixth mistake: Ignoring PII and sensitive data. Vector embeddings can inadvertently encode personally identifiable information. When scaling semantic search, implement PII redaction at the embedding layer. Sensitive data should never become part of your embedding space. This is a major governance hurdle in 2026.
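Several of these mistakes are avoided in the same place: the step that prepares text before it is embedded. The sketch below redacts email addresses, splits text into overlapping word windows, and keeps source metadata on every chunk. `IngestionPrep` and `PreparedChunk` are hypothetical names, and the single email pattern is only an illustration; real PII detection needs much broader coverage.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text.RegularExpressions;

public sealed record PreparedChunk(string Source, int Index, string Text);

public static class IngestionPrep
{
    // Illustrative pattern only: catches common email shapes, nothing else.
    private static readonly Regex Email =
        new(@"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}", RegexOptions.Compiled);

    private static readonly char[] Whitespace = { ' ', '\t', '\r', '\n' };

    public static string Redact(string text) => Email.Replace(text, "[EMAIL]");

    // Split into windows of chunkWords words; consecutive windows share overlapWords
    // words so that a sentence cut at a boundary still appears whole in one chunk.
    public static List<PreparedChunk> Chunk(string source, string text, int chunkWords, int overlapWords)
    {
        if (chunkWords <= 0)
            throw new ArgumentOutOfRangeException(nameof(chunkWords));
        if (overlapWords < 0 || overlapWords >= chunkWords)
            throw new ArgumentException("Overlap must be non-negative and smaller than the chunk size.");

        var words = Redact(text).Split(Whitespace, StringSplitOptions.RemoveEmptyEntries);
        var chunks = new List<PreparedChunk>();
        int step = chunkWords - overlapWords;

        for (int start = 0; start < words.Length; start += step)
        {
            var piece = string.Join(" ", words.Skip(start).Take(chunkWords));
            chunks.Add(new PreparedChunk(source, chunks.Count, piece));
            if (start + chunkWords >= words.Length) break; // this window reached the end
        }
        return chunks;
    }
}
```

Because redaction runs before chunking, the redacted text is what gets embedded and stored, so the original address never reaches the vector database.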

What to Do Next: Your 2026 Roadmap

The landscape of semantic search and vector databases is maturing rapidly. 2026 is the inflection point where these technologies become expected infrastructure, not nice-to-have features.

Start by assessing where semantic search delivers value in your applications. Where do users struggle with keyword search? Where do support teams waste time searching for information? Where do recommendation systems feel generic?

Run a pilot project. Semantic search is straightforward to prototype. You can build a working proof of concept in days, not months. Start with a non-critical application or internal tool. Validate that semantic search actually solves your problem before committing to production deployment.

Choose a vector database platform aligned with your infrastructure preferences and operational capabilities. For organizations prioritizing speed to production, Pinecone simplifies the decision. For teams with infrastructure expertise, Weaviate or Qdrant provide more flexibility. For systems handling massive scale with high concurrency, Milvus delivers throughput.

Implement hybrid search from day one. Combining semantic and keyword approaches handles more use cases correctly. The small additional complexity in implementation prevents problems later.

Invest in your embedding model. The quality of your embeddings determines the quality of your search. For specialized domains, consider fine-tuning embedding models on domain-specific data. For 2026, evaluate modern Late Interaction models like ColBERT and multi-modal encoders alongside traditional options.

Consider Small Language Models for privacy-conscious RAG. In 2026, you do not always need GPT-4 or Claude for RAG applications. A small model such as Phi-4 or a compact Llama variant running locally on your infrastructure can deliver strong results while keeping sensitive data entirely under your control. For organizations handling customer data, healthcare information, or financial records, local small language models are increasingly the right architectural choice.

Finally, connect semantic search to generative AI through RAG and Agentic RAG patterns. Vector databases alone retrieve information. Combined with language models and agent frameworks that decide which tools to use, they enable intelligent applications that understand context, route queries intelligently, and cite sources accurately. This is the 2026 standard for intelligent applications.

Key Takeaways

Vector databases are foundational infrastructure for modern web applications. They enable semantic search that understands user intent instead of matching keywords. They power RAG patterns that connect generative AI to current, domain-specific information. By 2026, Agentic RAG systems that intelligently route queries across multiple data sources represent the cutting edge. Vector databases are no longer experimental technology; they are production-ready infrastructure.

Pinecone offers managed simplicity with premium performance. Weaviate provides flexible control and hybrid search capabilities. Qdrant delivers real-time performance for interactive applications. Milvus excels at throughput and concurrent workloads. The choice depends on your specific requirements.

.NET applications can implement semantic search effectively using AWS Bedrock, Entity Framework Core 10, and the new Microsoft.Extensions.AI abstraction layer. The platform now offers first-class vector support with minimal boilerplate.

Multi-modal embeddings extend semantic search beyond text to images, audio, and video. These capabilities open new application possibilities in 2026.

Privacy and governance matter. Implement PII redaction at the embedding layer and consider local small language models for applications handling sensitive data.

The real opportunity is in application and business outcomes. Better search improves user experience and conversion rates. Smarter customer support reduces costs and increases satisfaction. Automated document retrieval enables faster decisions. These are not merely technical achievements; they are business achievements enabled by modern vector database technology.

Ready to Implement Semantic Search?

Hrishi Digital Solutions specializes in building AI-powered applications for enterprise clients. We combine semantic search and RAG patterns with cloud-native architecture to solve real business problems. Our enterprise web application development services deliver production-ready AI implementations.

Whether you are migrating legacy applications to modern platforms or building new AI-native systems, our team understands the technical and business dimensions of semantic search implementation.

Book a Free 30-Minute Strategy Consultation

Let us review your application landscape and identify where semantic search delivers the highest value.
